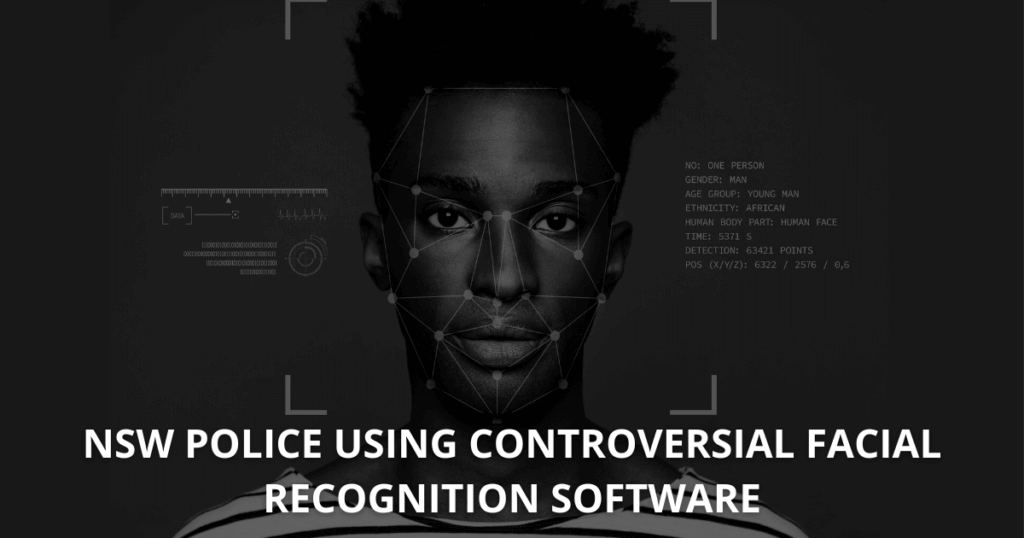

Using controversial facial recognition software, NSW Police has set its sights on serious and organised crime, saying the software has played a key role.

In August 2019, a man murdered Michaela Dunn in a Sydney CBD apartment.

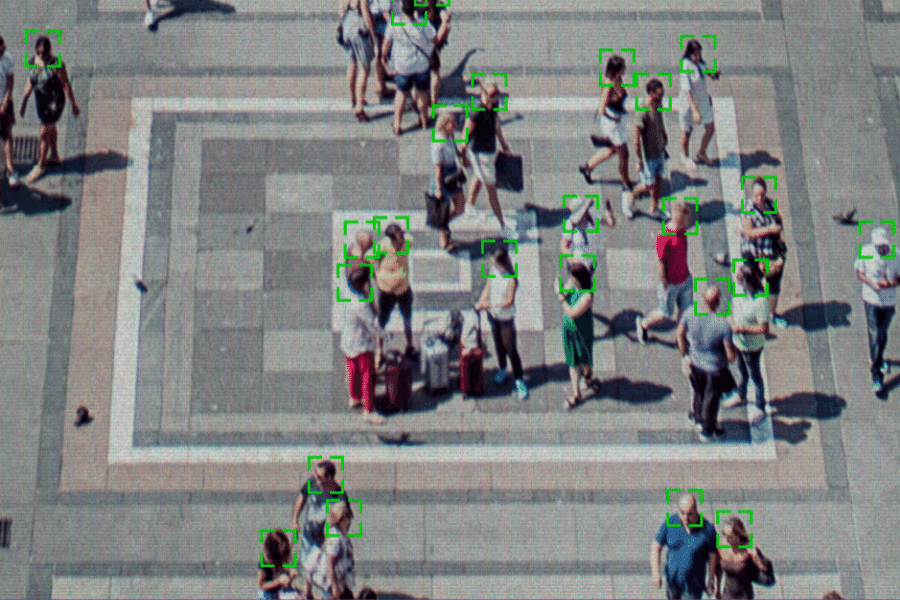

One of the urgent things police had to do was retrace the man’s steps. The Facial Recognition and Biometric Unit of the NSW Police used a close-up of his bumbag and face to track his movements through the city in seized CCTV footage.

The team put together a timeline of his movements in the city and on public transport.

“We used his face and that identified him, maybe 90 per cent of the time,” State Intelligence commander Assistant Commissioner Tony Crandell said.

“He actually had a pretty distinctive bumbag so we said ‘why don’t we just identify the object and track that’.

“The bumbag was picked up 100 per cent of the time.”

What would have taken a team of four detectives four months took the Facial Recognition and Biometric Unit three days.

Controversially capturing suspects

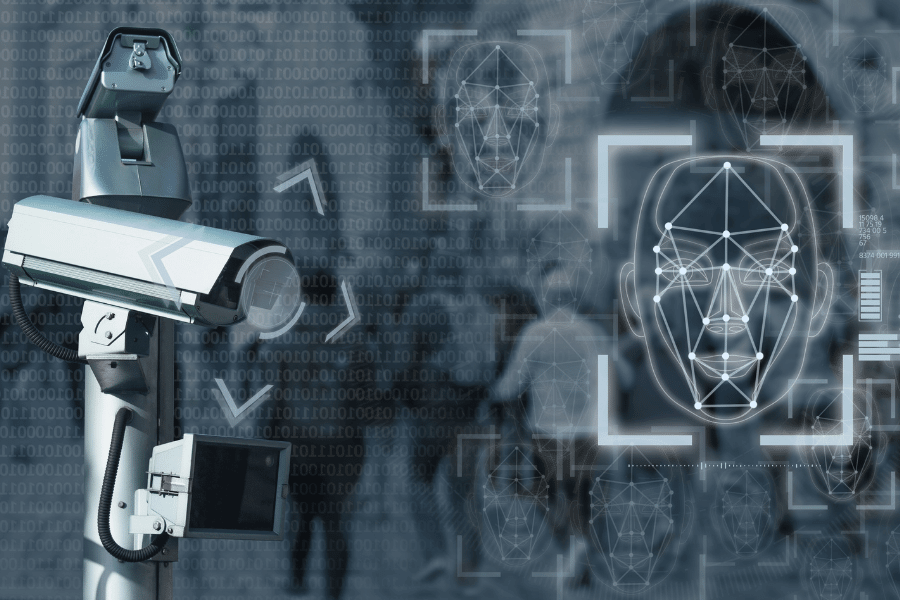

Facial recognition software can also identify a person of interest by using algorithms to compare them to mugshots stored in the police database.

The software, PhotoTrac, stores one million photographs of people arrested and charged in NSW to compare to potential suspects caught on CCTV, for at least a decade.

This raises the question of whether the computer system might disproportionately target previous offenders.

NSW Police trialled live facial recognition technology at a gun show in a bid to identify anyone banned from holding a firearm. However, the accuracy wasn’t up to scratch.

From a social acceptance standpoint, Mr Crandell doesn’t think NSW is ready for that yet either.

“Personally I don’t think the benefits are worth it at this point,” he said.

“That might change depending on what society’s view is.”

Any police reach into the public sphere, where people expect to carry out their lives with a degree of autonomy, is a hard sell.

Facial recognition software under fire for racism

A study from the Massachusetts Institute of Technology (MIT) published in 2018 found that three of the biggest commercially available facial recognition programs were up to 99 per cent accurate for white men. However, error rates rose as high as 35 per cent for darker-skinned women.

Maki Issa was one of six African men in a landmark racial profiling case involving Victoria Police back in 2013.

Mr Issa said young African men already felt targeted by law enforcement, and more false positives would only erode relations with the community further.

“Some young African guy commits a crime and pretty much every African person they’ve come across in the last year or so is a suspect now until they find that person,” he told SBS. “Some people get taken into a police station and get told, ‘mistake, you’re somebody else’.”

Facial recognition accuracy and false positives can be problematic

In 2017, the UK trialled a similar program, which ended up being wrong 98 per cent of the time.

David White, a cognitive psychologist at the University of NSW, said it was also important that the weaknesses of a system were not compounded by the biases of the person using it. “If you don’t pay attention to that there is a dangerous level of false positives,” he said.

Error rates can also be high when the imagery is poor, he added. “Most CCTV imagery, for example, I would class as substandard.”

“I think that’s a challenging task and it’s not just for the Australian government but a challenging task in general. In building these systems so they are as accurate as possible and building these systems so they don’t have biases against certain segments of society.”